On the application I’m helping “make fast,” one of the optimizations an architect identified was to use net.pipe or net.tcp on the application tier. The services on that tier call into each other, and they waste a lot of time on all the message encapsulation that comes with wsHttpBinding.

We first tried net.pipe, because it is incredibly fast and entirely local. However, because of how security is handled here, it didn’t work. Next up: net.tcp.

Overall, it wasn’t that difficult to set up the services with dual bindings (wsHttpBinding for calls from other servers and net.tcp for calls originating on the same server). Granted, there were a lot of changes, and since all the configs here are edited by hand, the process was very error-prone. I will be glad not to do any detailed configuration for a while.
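The routing rule behind the dual bindings is simple enough to sketch. In WCF the choice is normally made in each caller’s endpoint configuration rather than in code, so the Python below is only a language-neutral illustration of the decision, not WCF code. The service path `/Services/OrderService.svc` and the host names are hypothetical; port 808 is the default net.tcp port that WAS uses.

```python
# Hypothetical base addresses for one service exposed on two endpoints.
# Host names and the service path are illustrative only; 808 is the
# default net.tcp port for a WAS-hosted site.
HTTP_BASE = "http://{host}/Services/OrderService.svc"
TCP_BASE = "net.tcp://{host}:808/Services/OrderService.svc"


def choose_endpoint(target_host: str, local_host: str) -> str:
    """Return the endpoint address a caller should use for target_host.

    Calls that stay on the same server use the net.tcp endpoint;
    calls that cross a machine boundary stay on the HTTP
    (wsHttpBinding) endpoint.
    """
    # Windows host names are case-insensitive, so compare them that way.
    if target_host.lower() == local_host.lower():
        return TCP_BASE.format(host=target_host)
    return HTTP_BASE.format(host=target_host)


print(choose_endpoint("APP01", "app01"))  # net.tcp://APP01:808/Services/OrderService.svc
print(choose_endpoint("APP02", "app01"))  # http://APP02/Services/OrderService.svc
```

In the real setup both endpoints exist on every service, and only the caller’s configuration decides which one it uses.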

Anyway, we tested the build that incorporated it in three different environments, and over the weekend it went into Production. Of course, that is when the issues started.

Bright and early Monday morning, users were presented with a nice 500 error after they logged into the application. On the App tier servers, every request to the services logged the following error in the Application event log:

- Log Name: Application
- Source: ASP.NET 4.0.30319.0
- Event ID: 1088
- Task Category: None
- Level: Error
- Keywords: Classic
- User: N/A
- Description: Failed to execute request because the App-Domain could not be created. Error: 0x8000ffff Catastrophic failure

Well, since it mentioned the App Domain, we ran an IISReset and all was well. I didn’t think too much about it at the time and only did some cursory searching, since it was the first time we had seen it. However, today it happened again.

Our app is used consistently between 7 AM and about 7 PM, but it isn’t used at all overnight, which is when the appPool is scheduled to recycle (3 AM). It appeared that the recycle was what was killing us, since we believed only the App Domain was being recycled and not the complete worker process.
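Since the 3 AM recycle was the first suspect, it helps to be able to check which app pools actually have a scheduled recycle. The Python sketch below reads the `<recycling><periodicRestart><schedule>` entries from an applicationHost.config document (normally found at `%windir%\System32\inetsrv\config\applicationHost.config`). The embedded fragment is trimmed and illustrative, and the pool name `AppTierPool` is hypothetical.

```python
import xml.etree.ElementTree as ET

# Trimmed, illustrative applicationHost.config fragment. "AppTierPool" is a
# hypothetical pool name; the element layout follows the IIS
# <recycling><periodicRestart><schedule> configuration schema.
SAMPLE = """<configuration>
  <system.applicationHost>
    <applicationPools>
      <add name="AppTierPool" managedRuntimeVersion="v4.0" managedPipelineMode="Classic">
        <recycling>
          <periodicRestart time="00:00:00">
            <schedule>
              <add value="03:00:00" />
            </schedule>
          </periodicRestart>
        </recycling>
      </add>
      <add name="DefaultAppPool" />
    </applicationPools>
  </system.applicationHost>
</configuration>"""


def scheduled_recycles(config_xml: str) -> dict[str, list[str]]:
    """Map each app pool name to its scheduled recycle times (may be empty)."""
    root = ET.fromstring(config_xml.strip())
    result: dict[str, list[str]] = {}
    for pool in root.findall("./system.applicationHost/applicationPools/add"):
        # Each <schedule><add value="hh:mm:ss"/> is one time-of-day recycle.
        times = [
            entry.get("value")
            for entry in pool.findall("./recycling/periodicRestart/schedule/add")
        ]
        result[pool.get("name")] = times
    return result


print(scheduled_recycles(SAMPLE))
# {'AppTierPool': ['03:00:00'], 'DefaultAppPool': []}
```

Running it against a copy of each server’s real file makes it easy to compare recycle settings across environments.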

We immediately had the guys here replace the nightly recycle with a full IISReset. That at least gave us a workaround until we could determine the actual root cause, come up with a fix, and test that fix. As it turned out, it didn’t take long to determine the root cause…

One of the interesting things about this issue was that after it started happening in Production, a few of the other environments started exhibiting similar symptoms: Prod-Like and a smaller sandbox environment. Mind you, neither of these environments had shown these issues during testing.

So, I took some time to dive in and actually figure out the problem. At first I thought it was caused by metabase misconfigurations in these environments. I wouldn’t say that all of these environments were pristine, or consistent with each other. I found a few things, but nothing really stood out…until…

While I was diffing the various applicationHost.config files, Notepad++ told me one had been edited and needed to be reloaded (they were removing the appPool recycles). As soon as this happened, the 500 errors started. It didn’t help that they changed both machines at the same time, which took down the whole application, but that’s another story.

This led me to believe that the problem wasn’t in the configuration itself, but it also showed me how to reproduce it, at least partway. The missing piece was that I first had to hit the website to invoke the services, and then change the config to trigger an app domain recycle.

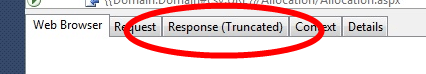

Then I attempted to attach WinDbg to the worker process to see what it was doing. That was a dead end, because nothing actually happened in the process: no exceptions being thrown, no stuck threads. It just appeared to sit there.

A bit more searching led me to an article describing exactly the issue we were having, which said that switching the appPool from Classic to Integrated mode fixed it. However, it didn’t explain why. Of course I tried it, and it worked. Still, to answer the customer’s questions, I knew I needed to find out why.

I still don’t have a great explanation, but apparently net.tcp with WAS activation has to run in Integrated mode. If it doesn’t, you get the 500 error. Yet ours worked fine until the app domain was recycled. Well, according to SantoshOnline, “if you are using netTcpBinding along with wsHttpBinding on IIS7 with application pool running in Classic Mode, you may notice the ‘Server Application Unavailable’ errors. This happens if the first request to the application pool is served for a request coming over netTcpBinding. However if the first request for the application pool comes for an http resource served by .net framework, you will not notice this issue.”
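Given that quote, the configuration worth auditing is any application that enables net.tcp while its app pool runs in Classic mode. Here is a minimal Python sketch that flags those combinations in an applicationHost.config document. It assumes the IIS defaults for missing attributes (`managedPipelineMode` is Integrated, `enabledProtocols` is `http`), and for brevity it ignores `<applicationDefaults>` inheritance and simply falls back to `DefaultAppPool`. Site, path, and pool names in the sample are hypothetical.

```python
import xml.etree.ElementTree as ET

# Illustrative applicationHost.config fragment; site, path and pool names
# are hypothetical. Attribute names follow the IIS configuration schema.
SAMPLE = """<configuration>
  <system.applicationHost>
    <applicationPools>
      <add name="AppTierPool" managedPipelineMode="Classic" />
      <add name="WebPool" managedPipelineMode="Integrated" />
      <add name="ReportsPool" />
    </applicationPools>
    <sites>
      <site name="AppTier" id="1">
        <application path="/Services" applicationPool="AppTierPool"
                     enabledProtocols="http,net.tcp" />
        <application path="/Reports" applicationPool="ReportsPool"
                     enabledProtocols="http,net.tcp" />
      </site>
      <site name="Web" id="2">
        <application path="/" applicationPool="WebPool" />
      </site>
    </sites>
  </system.applicationHost>
</configuration>"""


def classic_pools_with_net_tcp(config_xml: str) -> list[tuple[str, str, str]]:
    """Return (site, app path, pool) for apps that enable net.tcp in a Classic pool."""
    host = ET.fromstring(config_xml.strip()).find("system.applicationHost")
    # managedPipelineMode defaults to Integrated when the attribute is absent.
    modes = {
        pool.get("name"): pool.get("managedPipelineMode", "Integrated")
        for pool in host.findall("applicationPools/add")
    }
    hits = []
    for site in host.findall("sites/site"):
        for app in site.findall("application"):
            # enabledProtocols defaults to "http"; it is a comma-separated list.
            protocols = [p.strip() for p in app.get("enabledProtocols", "http").split(",")]
            # Simplification: ignores <applicationDefaults> and assumes DefaultAppPool.
            pool = app.get("applicationPool", "DefaultAppPool")
            if "net.tcp" in protocols and modes.get(pool) == "Classic":
                hits.append((site.get("name"), app.get("path"), pool))
    return hits


print(classic_pools_with_net_tcp(SAMPLE))
# [('AppTier', '/Services', 'AppTierPool')]
```

Switching each flagged pool to Integrated mode is the change that made the problem go away for us; the audit just makes it easier to find every place that needs it.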

It would have been nice to learn that from Microsoft’s article on it, or at least to get a few more details. I remember reading an article about the differences between Integrated and Classic mode, but I sure don’t remember anything specific to this.

Anyway, I hope this helps someone who runs into the same issue…