This is a continuation of my previous post on the half-baked core features of load testing in Visual Studio 2010. We had been progressing fairly well, but with some of the new fixes that have gone into the application, we have run into new issues.

I would like to preface this by saying that our application is by no means great. In fact, it is pretty janky and does a lot of incredibly stupid things. Having a 1.5MB viewstate (or larger) is an issue, and I get that. However, the way that VS handles it is just plain unacceptable.

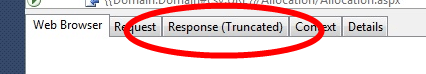

With that said, I’m sure you can imagine where this is going. When running an individual webtest, each response cannot be larger than 1.5MB. This took a bit of time to figure out, as many of our tests were simply failing. The best part is that we have a VIEWSTATE extract parameter (see #1 in the previous post), and the error we always get is that the VIEWSTATE cannot be extracted. Strange, I see it in the response window when I run the webtest. Oh, wait, does that say Response (Truncated)? Oh right, because my response is over 1.5MB.
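To make the failure concrete, here is a minimal, self-contained sketch of what I believe is going on. It does not use the VS extraction rule itself: the regex, the class name, and the helper methods are all made up for illustration, and the limit is counted in characters rather than bytes to keep things simple. The assumption is that the hidden field's value runs past the cut-off, so the captured body never contains the closing quote.

```csharp
using System;
using System.Text.RegularExpressions;

public static class TruncatedViewStateDemo
{
    // Stand-in for the 1.5MB limit described above (counted in chars here).
    public const int CaptureLimit = 1500000;

    // Simplified, hypothetical extraction pattern: it only matches a
    // __VIEWSTATE hidden field whose value attribute is complete.
    private static readonly Regex ViewStateField = new Regex(
        "name=\"__VIEWSTATE\"[^>]*value=\"([^\"]*)\"");

    // Builds a fake page whose viewstate is viewStateLength characters long.
    public static string BuildPage(int viewStateLength)
    {
        string viewState = new string('A', viewStateLength);
        return "<html><body><form>"
            + "<input type=\"hidden\" name=\"__VIEWSTATE\" value=\"" + viewState + "\" />"
            + "</form></body></html>";
    }

    // Mimics a capture limit by cutting the body off at 'limit' characters.
    public static string Truncate(string body, int limit)
    {
        return body.Length <= limit ? body : body.Substring(0, limit);
    }

    // Returns true and the field value only if a complete field is present.
    public static bool TryExtract(string body, out string viewState)
    {
        Match match = ViewStateField.Match(body);
        viewState = match.Success ? match.Groups[1].Value : null;
        return match.Success;
    }

    public static void Main()
    {
        string full = BuildPage(2000000);
        string captured = Truncate(full, CaptureLimit);
        string value;
        Console.WriteLine("Full body:      " + TryExtract(full, out value));     // True
        Console.WriteLine("Truncated body: " + TryExtract(captured, out value)); // False
    }
}
```

The full body extracts fine; the truncated one has no closing quote for the value, so there is nothing complete to extract, which matches the error we keep seeing.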

Oh, and that’s not just truncated for viewing, that’s truncated in memory. Needless to say, this has caused a large number of issues for us. Thankfully, VS 2010 is nice and you can create plugins to get around this (see below). The downside is that VS has obviously not been built to run webtests of our complexity, and definitely not with the 1.5MB response limit bypassed.

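    using Microsoft.VisualStudio.TestTools.WebTesting;

    // The class name is illustrative; the method belongs in any WebTestPlugin subclass.
    public class LargeResponsePlugin : WebTestPlugin
    {
        public override void PreWebTest(object sender, PreWebTestEventArgs e)
        {
            // Raise the capture limit to 15,000,000 bytes, ten times the 1.5MB that was truncating our responses.
            e.WebTest.ResponseBodyCaptureLimit = 15000000;
            // Stop the webtest at the first error.
            e.WebTest.StopOnError = true;
            base.PreWebTest(sender, e);
        }
    }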

If you use this plugin, be prepared for a lot of painful hours in VS. I am running this on a laptop with 4GB of RAM, and before the webtest runs, devenv.exe is using ~300MB of RAM. During the test, however, that balloons to 2.5GB and pegs one of my cores at 100% utilization as it attempts to parse all the data. Fun!

The max amount of data we could have in the test context is 30MB. Granted, as mentioned earlier, this is a lot of text. However, I fail to see how it accounts for almost 100x that amount in RAM.
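For what it's worth, here is the back-of-the-envelope math as a small sketch, using only the numbers quoted in this post (the class and method names are made up). The one factor I can point to with certainty is that .NET strings are UTF-16, so mostly-ASCII text such as a viewstate takes two bytes per character once it is held as a string. Whether the 30MB is actually held that way inside VS is an assumption on my part.

```csharp
using System;

public static class MemoryRatioDemo
{
    // How many times larger the observed memory is than the payload.
    public static double Ratio(double observedMb, double payloadMb)
    {
        return observedMb / payloadMb;
    }

    public static void Main()
    {
        const double payloadMb = 30.0;       // upper bound on test context data
        const double baselineMb = 300.0;     // devenv.exe before the webtest
        const double peakMb = 2.5 * 1024.0;  // devenv.exe during the webtest

        double growthMb = peakMb - baselineMb;

        // Growth relative to the raw payload.
        Console.WriteLine("vs raw payload:    " + Ratio(growthMb, payloadMb));
        // Growth relative to the payload held as UTF-16 strings (assumed 2 bytes per char).
        Console.WriteLine("vs UTF-16 payload: " + Ratio(growthMb, payloadMb * 2.0));
    }
}
```

That works out to roughly 75x the raw payload, or about 38x even after allowing for the UTF-16 doubling, so the encoding alone comes nowhere near explaining the growth.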

Thankfully, in a load test scenario all that data isn’t parsed out to be viewed and you don’t have any of these issues. You just need to create perfect scripts that you don’t ever need to update. Good luck with that!

Oh and as an update, for #3 in my previous post, I created a bug for it, but haven’t heard anything back. Needless to say, we are still having the issue.

And I realize that we’ve had a lot of issues with VS 2010, and I get angry about it. However, I want to reiterate that no testing platforms are good.

Comments

One response to “More Visual Studio 2010 Performance Testing "Fun"”

It appears the bug you entered (#3 from your previous post) was closed as “Not Reproducible”.

Works on my machine!